My Bot Got Banned for Being a Bot

A few weeks ago I pulled out my old work laptop with a half-dead battery, hooked it up to a charger, and installed Openclaw to start experimenting with my own personal agent.

Since I didn’t want to risk giving it full access to any real personal data, I created a new Gmail account for my bot and gave that account delegated read-only access to my email and calendar.

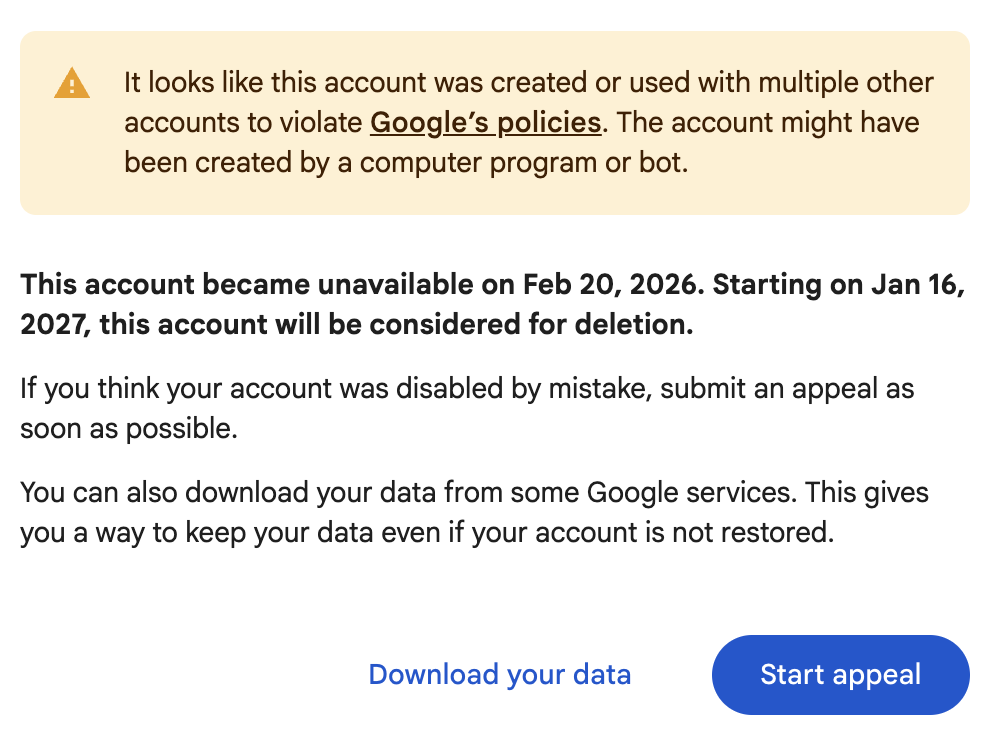

This went great for a bit, until suddenly it stopped working. Why?

Well, it turns out Google doesn’t like bots, and my bot got disabled for being a bot. It’s hard to really appeal: while the account wasn’t created by a bot, it was definitely operated by one. But this feels very different from the mass account creation and large-scale automation this policy was no doubt meant to prevent.

This is not just a Google problem but an industry-wide challenge. How do we welcome agents to our platforms without enabling abuse by bots? For a long time, industry best practice has been to put automated abuse prevention in front of our platforms: CAPTCHAs, phone verification, bot detection heuristics, and similar measures.

It’s something we’ve been thinking a lot about at Netlify as we’ve made AX (agent experience) the north star of our product and keep pushing the frontier of how to give agents easy access and welcome agent <-> human collaboration on our platform. Across the industry, we are starting to see agents as a new user type and persona.

But all the heuristics and roadblocks we’ve built for bots tend to impact agents equally. How do we distinguish between the two? How do we allow people’s Clawds to sign up and use our services on their behalf, to collaborate and publish together, while keeping large-scale SEO spam, phishing services, automated bitcoin miners, and the like off our platforms?

The founder of Resend ran into a similar problem with his own service: he was unable to get his newly set up Openclaw to log in to Resend because of the bot protection, and he promptly made the team rip it out.

Generally, our fraud protection is going to have to shift from heuristics around “is this user a bot” to heuristics around “is this user doing bad things with our platform”, which is not always trivial. But the alternative is losing out on a whole new, massive audience of autonomous agents and the humans that do their work through them.
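To make that shift a bit more concrete, here is a minimal sketch in Python of what judging behavior instead of "botness" could look like. Everything in it is an assumption for illustration: the `Activity` fields, the thresholds, and the `decide` function are hypothetical and do not describe how Netlify, Google, or Resend actually handle fraud. The point is only that the `is_automated` flag is recorded but never used in the decision.

```python
from dataclasses import dataclass


@dataclass
class Activity:
    """Hypothetical 24-hour summary of what one account did."""
    is_automated: bool    # agent or human; recorded, but never used for scoring
    sites_published: int
    outbound_emails: int
    abuse_reports: int
    cpu_hours: float


def abuse_signals(a: Activity) -> list[str]:
    """Collect signals based only on what the account did (made-up thresholds)."""
    signals = []
    if a.sites_published > 50:
        signals.append("mass_publishing")      # e.g. SEO spam farms
    if a.outbound_emails > 1000:
        signals.append("bulk_email")           # e.g. phishing campaigns
    if a.abuse_reports > 0:
        signals.append("reported_abuse")
    if a.cpu_hours > 20:
        signals.append("sustained_compute")    # e.g. crypto mining
    return signals


def decide(a: Activity) -> str:
    """Return 'allow', 'review', or 'suspend' from behavior alone."""
    signals = abuse_signals(a)
    if "reported_abuse" in signals or len(signals) >= 2:
        return "suspend"
    if signals:
        return "review"
    return "allow"


# A personal agent doing modest work is allowed, even though it is automated.
personal_agent = Activity(True, sites_published=2, outbound_emails=15,
                          abuse_reports=0, cpu_hours=0.5)
# A human-operated spam operation is suspended, even though it is not a bot.
spam_operator = Activity(False, sites_published=400, outbound_emails=5000,
                         abuse_reports=3, cpu_hours=1.0)

print(decide(personal_agent))
print(decide(spam_operator))
```

A real system would need far richer signals than a handful of counters, which is exactly why this shift is not trivial.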

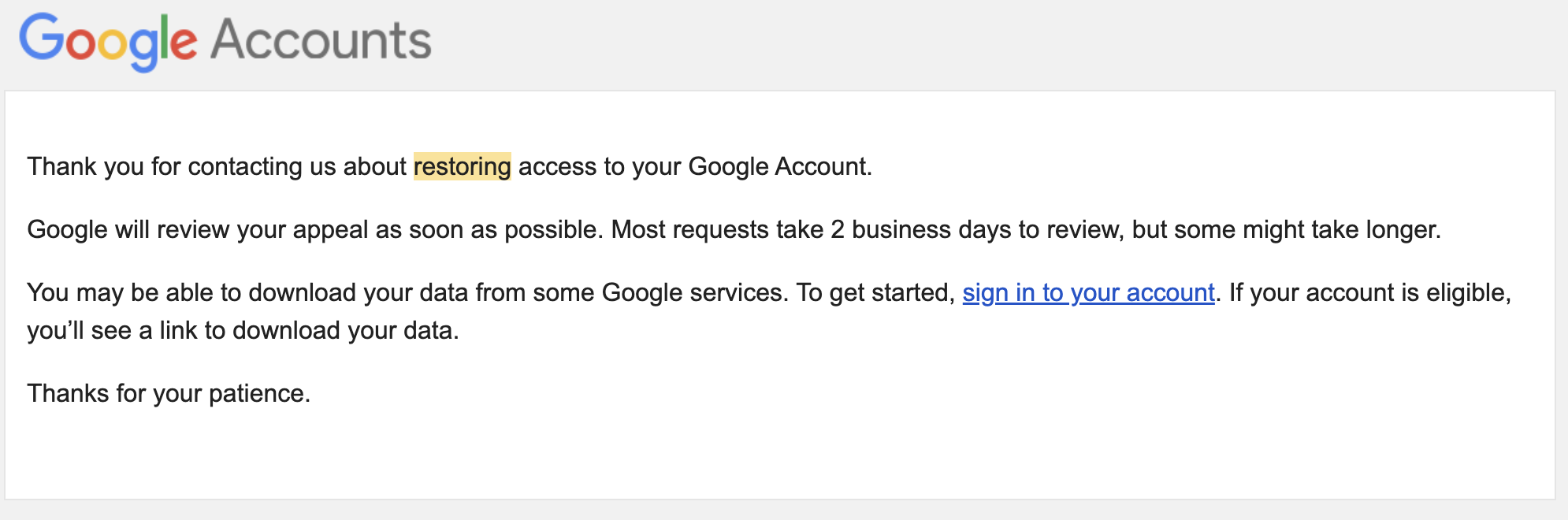

Google has realized the same. I will say I was actually surprised when I submitted an appeal to reinstate MBot’s new Gmail account and quite promptly received a positive response.

This shows that Google fully understands that someone’s Agent is not the same as a bot, and that they want the business of my Openclaw.

That said, anytime I open that email account, I still get a CAPTCHA, several images where I’m asked to identify motorcycles, bikes, buses, etc., a biometric check, and a text message before they let me in.

All of this is no doubt going to change in the years to come. As an industry, we’ll need to build real standards that allow agents to identify themselves as legitimate actors operating on behalf of someone. We’ll have to rethink our layers of bot protection, rate limits, and fraud mitigation to allow agents to automate the operation of our systems on behalf of their humans.

Ultimately, companies will need to either build for agents and create their own AX practice, or become irrelevant as personal agents become commonplace and the preferred way to interact with the virtual world.